STAR-CD 4.02 works out of the box, although it currently prints warnings of the form ERROR: ld.so: object 'libmpi.so' from LD_PRELOAD cannot be preloaded: ignored. As these messages seem to be harmless, I’m not sure whether I’ll debug their cause any further.

STAR-CD 3.26 also works out of the box.

User subroutines are not yet tested; some additional steps will be required to get them compiled as the required PGI compiler is not installed locally…

In initial tests, star -chkpnt failed with one of two messages: TAR checkpoint failed due to invalid "star.pst" file. or TAR checkpoint failed due to invalid "star.ccm" file.

=====================================================================

In principle, using STAR-CD works as follows (access to the STAR-CD module is restricted by ACLs):

- prepare your input files on your local machine; the RRZE systems are not intended for interactive work.

If for some reason you have to use the RRZE systems for pre- or postprocessing, do not start prostar etc. on the login nodes; submit an interactive batch job instead, using qsub -I -X -lnodes=1:ppn=4,walltime=1:00:00

- transfer all input files to the local filesystem on the Woody cluster using SSH (scp/sftp), i.e. copy them to

/home/woody1/.../.../...

- Use a batch file as follows:

[shell]

#!/bin/bash -l
# DO NOT USE #!/bin/sh in the line above as module would not work; also the "-l" is required!
#PBS -l nodes=2:ppn=4
#PBS -l walltime=24:00:00
#PBS -N STARCD-woody
#… any other PBS option you like

# let's go to the directory where the script was submitted
cd "$PBS_O_WORKDIR"

# load the STAR-CD module; either "star-cd/3.26_64bit" or "star-cd/4.02_64bit"
module add star-cd/3.26_64bit

# here we go
star -dp $(cat "$PBS_NODEFILE")

[/shell]

- submit your job to the PBS batch system using

qsub

- wait until the job has finished

- transfer the required result files to your local PC, analyze the results locally (using your fast graphics card)

- delete all files you no longer need from the RRZE system as disk space is still valuable
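The submit-and-wait steps above can be scripted. The following is only a minimal sketch: the batch file name starcd.pbs and the variable POLL_SECONDS are hypothetical, and it assumes that qstat returns a non-zero exit status once the job has left the queue, which depends on how the PBS server is configured.

```shell
#!/bin/bash
# Sketch: wait until a PBS job has left the queue.
# POLL_SECONDS is a made-up variable for this example (default: 60 seconds).
POLL_SECONDS=${POLL_SECONDS:-60}

# Poll qstat until it no longer knows the job, i.e. until it exits non-zero.
# Assumption: finished jobs are not kept in the queue listing by the server.
wait_for_job() {
    local jobid=$1
    while qstat "$jobid" >/dev/null 2>&1; do
        sleep "$POLL_SECONDS"
    done
}

# Usage on the cluster ("starcd.pbs" is a hypothetical batch file name):
#   jobid=$(qsub starcd.pbs)
#   wait_for_job "$jobid"
```

Polling once a minute is more than enough for jobs that run for hours; please do not poll the batch server every few seconds.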

Some more details on the “ERROR: ld.so: object ‘libmpi.so’ from LD_PRELOAD cannot be preloaded: ignored” message: Looking into the script which is actually used to call the STAR-CD binary, I can guess where the message might come from. They use something like $HPMPI/bin/mpirun ... -e LD_PRELOAD=libmpi$PNP_DSO ... -f .starboot.mpi, i.e. they do not specify a path for the library to be preloaded. I’m not sure what the current policy of ld.so from glibc is: does it look at the current LD_LIBRARY_PATH (if set), or does it only look at “secure” (predefined) directories? If the latter is the case, star of course cannot find the library …
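One way to narrow this down by hand is to check whether the library is reachable through LD_LIBRARY_PATH at all. The helper below is a rough sketch with a made-up function name; it only walks the directories listed in LD_LIBRARY_PATH and does not reproduce the real search logic of ld.so.

```shell
#!/bin/bash
# Sketch: print the first match for a library file name in LD_LIBRARY_PATH.
# find_in_ld_library_path is a hypothetical helper, not part of STAR-CD or glibc.
find_in_ld_library_path() {
    local lib=$1 dir
    local IFS=:                      # split LD_LIBRARY_PATH at colons
    for dir in $LD_LIBRARY_PATH; do
        if [ -n "$dir" ] && [ -e "$dir/$lib" ]; then
            printf '%s\n' "$dir/$lib"
            return 0
        fi
    done
    return 1                         # not found in any listed directory
}

# Usage, e.g. inside a job after "module add star-cd/4.02_64bit":
#   find_in_ld_library_path libmpi.so || echo "libmpi.so not in LD_LIBRARY_PATH"
```

If the library does show up here and the warning is printed anyway, that would point towards the "secure directories only" explanation.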

Problems with STAR-CD-4.02 (64-bit) and Infiniband: Running the PGI variant of STAR-CD-4.02 (64-bit) over Infiniband (i.e. using HP-MPI) may currently fail on our new Woody cluster as well as on the Infiniband partition of the Transtec cluster. The observations (currently based on just a single test case) are as follows:

- STAR-CD-4.02/PGI using HP-MPI/VAPI runs fine for a few iterations but then suddenly stops consuming CPU time on most of the nodes.

- STAR-CD-4.02/PGI using HP-MPI/TCP or mpich runs fine.

- STAR-CD-4.02/Absoft runs fine even with HP-MPI/VAPI!

Further tests are currently under way …

For the moment, module add star-cd/4.02_64bit; star -mpi=hp -mppflags="-v -prot -TCP" is the recommended way of starting STAR-CD-4.02.

Occasional problems of STAR-CD 3.26 with Infiniband as well: According to a user report, STAR-CD 3.26 with HP-MPI over Infiniband also suffers from the problem that it suddenly stops running. It seems that the AMG preconditioner is the reason for the problems.

So, check whether Infiniband runs fine for your cases. If not (and only if not), add -mpi=hp -mppflags="-v -prot -TCP"
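To keep a single batch file for both situations, the TCP fallback can be made switchable. This is only a sketch: the variable STAR_USE_TCP and the function build_star_opts are made up for this example, and appending the options after the node list is my assumption about the argument order.

```shell
#!/bin/bash
# Sketch: collect the extra star options only if the TCP fallback is requested.
# STAR_USE_TCP and build_star_opts are hypothetical names for this example.
build_star_opts() {
    star_opts=()
    if [ "${STAR_USE_TCP:-no}" = "yes" ]; then
        # flags as quoted in the text: use HP-MPI, but over TCP
        star_opts+=(-mpi=hp "-mppflags=-v -prot -TCP")
    fi
}

# Usage inside the batch file (argument order is an assumption):
#   build_star_opts
#   star -dp $(cat "$PBS_NODEFILE") "${star_opts[@]}"
```

The job can then be submitted with qsub -v STAR_USE_TCP=yes for the cases that hang over Infiniband, and without it otherwise.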

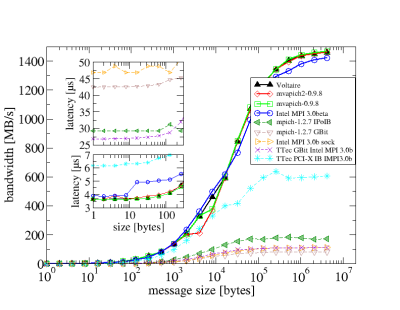

Upgraded IB stack seems to solve Infiniband problems: Updating from the Voltaire ibhost-stack to the Voltaire GridStack 4.1 (which is OFED-1.1 based) seems to have solved the issue with hanging STAR-CD processes. Please try to run without the argument -TCP!

As a technical note: the HP-MPI version which comes with STAR-CD 4.02 or 3.26 is too old to work with OFED; thus, the latest HP-MPI (i.e. 2.02.05) has been installed on Woody. The module files for STAR-CD have been adapted to automatically use this updated version. Your output (if -v -prot is used) should now show IBV instead of VAPI if the high-speed network is used.

Using IPoverIB as fallback: If native Infiniband does not work even with the upgraded IB stack (e.g. due to a bug in connection with AMG), try IP-over-IB by using -mppflags="-v -prot -TCP -netaddr 10.188.84.0/255.255.254.0".
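The netmask 255.255.254.0 in the -netaddr value above means that the range covers 10.188.84.0 through 10.188.85.255. If you want to check whether an address reported on a node (e.g. by ifconfig) falls into that range, the arithmetic can be sketched as follows; the function names and the example addresses are made up for illustration.

```shell
#!/bin/bash
# Sketch: test whether an IPv4 address belongs to a network/netmask pair.
# ip_to_int and ip_in_subnet are hypothetical helper names.

# Convert a dotted-quad address to a 32-bit integer.
ip_to_int() {
    local a b c d
    IFS=. read -r a b c d <<< "$1"
    echo $(( (a << 24) | (b << 16) | (c << 8) | d ))
}

# Succeed if address $1 lies in network $2 with netmask $3.
ip_in_subnet() {
    local ip net mask
    ip=$(ip_to_int "$1")
    net=$(ip_to_int "$2")
    mask=$(ip_to_int "$3")
    [ $(( ip & mask )) -eq $(( net & mask )) ]
}

# Usage (10.188.85.17 is an invented example address):
#   ip_in_subnet 10.188.85.17 10.188.84.0 255.255.254.0 && echo "IPoIB range"
```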

Another option available in HP-MPI (which is completely unrelated to the communication network but I’m too lazy to create another thread right now) is the ability to pin processes to specific CPUs of a node. On Woody, -mppflags="... -cpu_bind=v,map_cpu:0,1,2,3 ..." is the right choice if you run with 4 CPUs per node; if you have huge memory requirements and thus only use every second core, the correct option would be -mppflags="... -cpu_bind=v,map_cpu:0,1 ...".
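Both examples above simply list the cores 0 to n-1 for n processes per node, so the option can be generated from the ppn value. The helper below is a small sketch with a made-up function name; the numbering matches the two Woody examples in the text, and other node types may well need a different map.

```shell
#!/bin/bash
# Sketch: build the -cpu_bind option for n processes per node (cores 0..n-1).
# cpu_bind_option is a hypothetical helper; the mapping is only known to
# match the two Woody examples given in the text.
cpu_bind_option() {
    local n=$1 list="" i
    for (( i = 0; i < n; i++ )); do
        list+="${list:+,}$i"         # comma-separated core list
    done
    printf -- '-cpu_bind=v,map_cpu:%s\n' "$list"
}

# Usage:
#   star ... -mppflags="-v -prot $(cpu_bind_option 4)"
```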