(See the prelude for some general information on what this is all about)

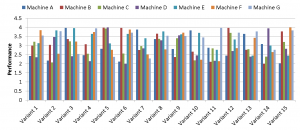

Figure 1: Lots of variants of an experiment, lots of machines, no idea for interpretation – why not show it all?

Computers produce data. Parallel computers produce more data. And doing performance experiments with parallel computers produces even more data, since we often conduct several runs with different code variants on different machines. Now imagine a situation where you’ve got all this data lying around on your disk, but you can’t make any sense of it. Since you wouldn’t bother to establish even a coarse performance model (your setup is just too complex for this kind of bean counting), you don’t know whether some particular performance measurement is “good” or “bad” on some particular machine, or how performance should change when some parameter is altered.

Why not put the cart before the horse and show all the data? This has decisive advantages: You can display all the work you did, without the embarrassing message that five or six numbers is all the relevant data you got from your research. Furthermore, it gives you the opportunity to add “discussion” at any desirable level of detail. For instance, if the page limit for submitting to your favorite conference is ten pages, and you have five pages full of messy bar graphs (such as the one shown in Fig. 1), you can easily fill the rest with meaningless “explanations” like “Variant 11 shows about 90% performance advantage on Machine G over Variant 10 on Machine F while, surprisingly, Variant 9 on Machine A is 6% slower than Variant 7 on Machine C.” You may want to write “92.23%” and “5.982%” here – see Stunt 8 for the reason.

In Summary, you can make up for your lack of insight by showing data. Plenty of it. Swamp your readers or your audience with hundreds of colorful bar graphs, scatter plots, timing diagrams, or whatever your favorite data visualization tool has to offer, and describe them at length. If you really want to leave the impression of true understanding, resort to obscure technical details − but this is the theme of another stunt.