On October 14, 2021 I gave an invited online talk at Stony Brook University's Institute for Advanced Computational Science (IACS). I talked about white/gray-box approaches to performance modeling and how they can fail in interesting ways on highly parallel systems because of desynchronization effects. The slides and a video recording are now available:

Title: From numbers to insight via performance models

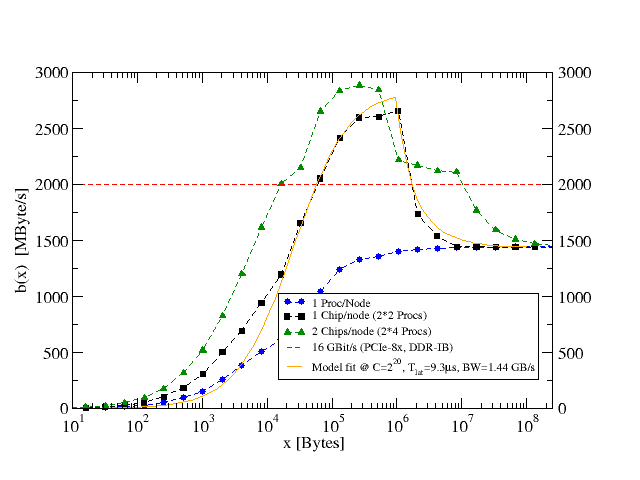

Abstract: High-performance parallel computers are complex systems. There seems to be a general consensus among developers that the performance of application programs has to be taken as a given and cannot really be understood in terms of simple rules and models. This talk is about using analytic performance models to make sense of performance numbers. Using examples from computational science, I will argue that it makes a lot of sense to try to set up performance models even if their accuracy is sometimes limited. In fact, it is when a model yields false predictions that we learn more about the problem, because our assumptions are challenged. I will start with a general categorization of performance models and then turn to ECM and Roofline models for loop-based code on multicore CPUs. Going beyond the compute-node level and adding communication models to the mix, I will show how stacking models on top of each other may not work as intended but can instead open up new insights and a fresh view of how massively parallel code is executed.