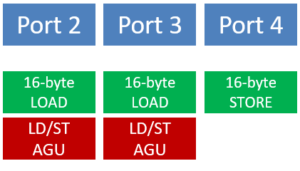

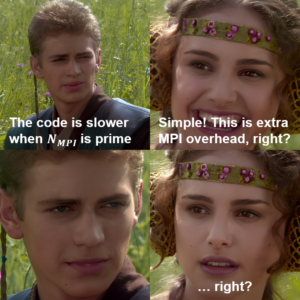

At the International Parallel and Distributed Processing Symposium (IPDPS) 2024 next week in San Francisco, CA, our PhD student Jan Laukemann will present a paper that came out of a collaboration between NHR@FAU and Brookhaven National Laboratory (BNL). “CloverLeaf on Intel Multi-Core CPUs: A Case Study in Write-Allocate Evasion” investigates a curious effect seen when benchmarking the MPI-only version of the CloverLeaf proxy application, which is part of the SPEChpc 2021 benchmark suite: When scaling this code across the cores of an Intel Xeon Ice Lake or Sapphire Rapids node, we observed peculiar breakdowns in performance whenever the number of processes was prime. Being aware that we might just have discovered the most horribly expensive way to calculate prime numbers, we looked into the details with meticulous measurements and performance models. We came to the conclusion that the breakdowns were caused neither by excessive MPI communication nor by broken layer conditions but by a new feature of Intel CPUs since Ice Lake: a write-allocate evasion mechanism called “SpecI2M.” SpecI2M is supposed to eliminate the write-allocate transfers from memory that are initiated by write misses if the cache line will be completely overwritten anyway (in which case the write-allocate is just overhead). As it turns out, SpecI2M is especially ineffective when the number of MPI processes is prime. To discover why, visit Jan’s talk (if you happen to be in San Francisco) or take a look at the paper:

- J. Laukemann, T. Gruber, G. Hager, D. Oryspayev, and G. Wellein: CloverLeaf on Intel Multi-Core CPUs: A Case Study in Write-Allocate Evasion. Accepted for publication at IPDPS 2024, the 38th IEEE International Parallel & Distributed Processing Symposium. Preprint: arXiv:2311.04797
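To see why write-allocate transfers matter, it helps to count memory traffic for a simple streaming loop. The following is a minimal sketch, not code from the paper: the function name and its parameters are made up for illustration, and it assumes double-precision arrays that are far too large for any cache, so every store misses.

```python
def bytes_per_iteration(loads, stores, write_allocate=True, word_bytes=8):
    """Memory traffic in bytes per loop iteration of a streaming kernel.

    Hypothetical helper for illustration only. Assumes each load and each
    store touches its own large array, so nothing is reused from cache.
    """
    read_traffic = loads * word_bytes
    write_traffic = stores * word_bytes  # modified lines are written back eventually
    # A store miss first reads the target cache line from memory
    # (the write-allocate), unless the hardware manages to evade it.
    wa_traffic = stores * word_bytes if write_allocate else 0
    return read_traffic + write_traffic + wa_traffic


# Copy kernel a[i] = b[i]: one load stream, one store stream.
copy_with_wa = bytes_per_iteration(loads=1, stores=1, write_allocate=True)
copy_without_wa = bytes_per_iteration(loads=1, stores=1, write_allocate=False)

print(copy_with_wa, copy_without_wa)  # 24 16
```

For this copy kernel, perfect write-allocate evasion would cut the memory traffic from 24 to 16 bytes per iteration. In a memory-bound loop, that difference shows up almost directly in the runtime, which is why it matters whether a mechanism like SpecI2M works or not.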

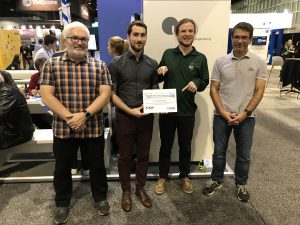

Along the way, we provided the first comprehensive, predictive Roofline model for the hot-spot loops in CloverLeaf, which helped a lot in figuring out what was actually going on. To our great delight, the paper is one of four best-paper candidates at the conference!
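For readers unfamiliar with the Roofline model, here is a generic sketch of the basic idea: the performance of a loop is bounded either by the peak compute rate or by the memory bandwidth divided by the loop's code balance (bytes transferred per flop). The function name and the numbers below are invented for illustration; they are not taken from the paper or from any real machine.

```python
def roofline_gflops(peak_gflops, bandwidth_gbs, bytes_per_flop):
    """Upper performance bound in GFlop/s according to the basic Roofline model.

    peak_gflops    -- peak compute performance (GFlop/s)
    bandwidth_gbs  -- sustained memory bandwidth (GB/s)
    bytes_per_flop -- code balance of the loop (bytes of memory traffic per flop)
    """
    memory_bound = bandwidth_gbs / bytes_per_flop
    return min(peak_gflops, memory_bound)


# Made-up example: a loop with 8 bytes of memory traffic per flop on a
# machine with 100 GFlop/s peak and 200 GB/s memory bandwidth.
limit = roofline_gflops(peak_gflops=100.0, bandwidth_gbs=200.0, bytes_per_flop=8.0)
print(limit)  # 25.0
```

Because write-allocate transfers add to the bytes moved per flop, they raise the code balance and lower the memory-bound limit. A model of this kind can therefore indicate whether the write-allocate was evaded in a given loop.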

On Sunday, May 12, the brand-new tutorial “
