Dominik and his PPAM Best Poster Award

Our paper “Performance Engineering for a Tall & Skinny Matrix Multiplication Kernel on GPUs” by Dominik Ernst, Georg Hager, Jonas Thies, and Gerhard Wellein just received the best workshop paper award at PPAM 2019, the 13th International Conference on Parallel Processing and Applied Mathematics, in Bialystok, Poland. In this paper, Dominik investigated different methods to optimize an important but often neglected computational kernel: the multiplication of two extremely non-square matrices, with millions of rows but only tens of columns. Vendor libraries still do not perform well in this situation, so you have to roll your own implementation. That is a daunting task because the optimization parameter space is huge, especially on GPGPUs.

The optimizations were guided by the Roofline model (hence “Performance Engineering”), which provides an upper limit for the performance of the kernel. On an Nvidia V100 GPGPU, Dominik’s solution achieves more than 90% of this upper limit for matrices with up to 40 columns, and more than 50% at up to 64 columns. This is significantly faster than what vendor libraries delivered at the time of writing.
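To give a feel for how such an upper limit comes about, here is a minimal Python sketch of a Roofline estimate. It is not taken from the paper. It assumes the kernel computes C = AᵀB in double precision, with both inputs having the same small number of columns, and that every input element is loaded from memory exactly once. The peak and bandwidth figures are nominal, illustrative values for a V100-class GPU, and the function names are made up for this example.

```python
def roofline_limit(peak_gflops, bandwidth_gbs, intensity):
    """Roofline upper bound in GFlop/s: min(peak, intensity * bandwidth)."""
    return min(peak_gflops, intensity * bandwidth_gbs)


def tsmm_intensity(m, n, bytes_per_element=8):
    """Arithmetic intensity (flop/byte) of C = A^T B for tall & skinny inputs.

    A has m columns and B has n columns; both have the same (huge) number
    of rows. Assumption: each element of A and B is read from memory once,
    and the traffic for the small m x n result is negligible.
    """
    flops_per_row = 2 * m * n                    # one multiply-add per (i, j) pair
    bytes_per_row = (m + n) * bytes_per_element  # one row of A plus one row of B
    return flops_per_row / bytes_per_row


# Assumed, nominal machine parameters (not measured values from the paper).
PEAK_GFLOPS = 7000.0    # double-precision peak
BANDWIDTH_GBS = 900.0   # memory bandwidth

for cols in (4, 16, 40, 64):
    limit = roofline_limit(PEAK_GFLOPS, BANDWIDTH_GBS, tsmm_intensity(cols, cols))
    print(f"{cols:3d} columns: at most {limit:7.1f} GFlop/s")
```

Under these assumptions the intensity grows linearly with the column count, so narrow matrices are limited by memory bandwidth and only fairly wide ones approach the compute peak.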

The work was funded by the project ESSEX (Equipping Sparse Solvers for Exascale) within the DFG priority programme 1648 (SPPEXA). A preprint of the paper is available at arXiv:1905.03136.

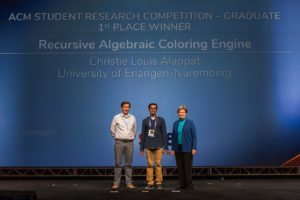

